Key Highlights

- AI adoption is slowing not because of capability limits, but because leaders fear losing control over decisions.

- Human-in-the-Loop ensures control and trust but limits speed and scalability.

- Fully autonomous AI enables speed and scale but increases risk and reduces transparency.

- Most real-world workflows require a mix of both, not an extreme choice.

- Leading organizations combine autonomy for routine tasks with human oversight for exceptions.

- Control should be based on risk, system maturity, data reliability, and decision complexity.

- The most effective approach is a phased shift from human validation to autonomous execution.

- Long-term success comes from designing systems where control evolves with performance and business needs.

AI adoption across manufacturing and retail is entering a new phase. Organizations are no longer experimenting with automation in isolated workflows. They are embedding intelligent systems into core operations such as supply chain planning, pricing, inventory management, and customer experience.

This shift is not just about technology. It is about control. On one side, there is a push toward full autonomy, driven by the need for speed, scale, and efficiency. On the other, there is hesitation. Leaders are not just asking whether AI works. They are asking what happens when it works without oversight.

This is not a debate about capability. It is a decision about how control should be structured as systems scale.

Understanding Human-in-the-Loop and Fully Autonomous AI

Before comparing both approaches, it is important to move beyond definitions and understand how they operate in practice.

Human-in-the-Loop (HITL)

Human-in-the-Loop systems are designed to keep humans actively involved in decision-making, even when AI is doing most of the analytical work.

In these systems, AI does not replace decision-making. It supports it. The system processes data, identifies patterns, and generates recommendations. However, the final decision remains with a human operator. This creates a workflow where AI enhances speed and insight, while humans provide judgment and oversight.

In manufacturing, this often appears in quality control systems where AI detects defects, but human operators validate final outputs. In retail, it can be seen in pricing or promotional strategies where AI suggests changes, but business teams approve them.
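The retail pricing case above can be sketched as an approval gate: the system proposes, and nothing changes until a person signs off. This is a minimal illustration, not a reference design. The names `Recommendation`, `Decision`, `recommend_price`, and `review` are hypothetical, and the pricing rule is a placeholder standing in for a real model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Recommendation:
    sku: str
    current_price: float
    suggested_price: float
    rationale: str


@dataclass(frozen=True)
class Decision:
    recommendation: Recommendation
    approved: bool
    reviewer: str
    final_price: float


def recommend_price(sku: str, current_price: float, demand_index: float) -> Recommendation:
    """Placeholder for a real model: move price with demand, capped at +/-10%."""
    change = max(-0.10, min(0.10, (demand_index - 1.0) * 0.5))
    suggested = round(current_price * (1 + change), 2)
    return Recommendation(sku, current_price, suggested, f"demand index {demand_index:.2f}")


def review(
    rec: Recommendation,
    reviewer: str,
    approve: Callable[[Recommendation], bool],
) -> Decision:
    """The human gate: the suggested price only takes effect if approved."""
    approved = approve(rec)
    final_price = rec.suggested_price if approved else rec.current_price
    return Decision(rec, approved, reviewer, final_price)


if __name__ == "__main__":
    rec = recommend_price("SKU-1", current_price=20.0, demand_index=1.5)
    # In practice `approve` would be a UI action; a lambda stands in for it here.
    print(review(rec, reviewer="pricing-team", approve=lambda r: True))
```

Recording the reviewer alongside each decision is what later makes accountability and approval-rate tracking possible.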

The core strength of this model lies in control. Organizations retain visibility into decisions and can intervene when necessary. It also allows teams to build trust gradually, as they can observe how AI performs before increasing its autonomy.

At the same time, this model introduces friction. Every decision that requires human validation slows down execution. As the volume of decisions increases, this becomes a bottleneck.

Fully Autonomous AI

Fully autonomous systems operate with minimal or no human intervention in day-to-day decision-making.

These systems are designed to:

- Interpret inputs

- Make decisions

- Execute actions

- Monitor outcomes

All of these steps run within a continuous loop. In retail, this may involve systems that automatically adjust pricing based on demand patterns, competitor activity, and inventory levels. In manufacturing, it can include systems that optimize production schedules or manage supply chain routing in real time.
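The four steps can be sketched as a closed loop with no human in it. The example below uses a deliberately simple replenishment rule. The `Store` fields, the reorder-point policy, and the assumption that orders arrive immediately are all illustrative simplifications, not a description of any particular system.

```python
from dataclasses import dataclass, field


@dataclass
class Store:
    on_hand: int
    reorder_point: int
    order_up_to: int
    log: list = field(default_factory=list)


def interpret(store: Store, demand: int) -> int:
    """Interpret inputs: project stock after today's demand."""
    return max(store.on_hand - demand, 0)


def decide(store: Store, projected: int) -> int:
    """Make a decision: refill to the target level if stock falls below the reorder point."""
    if projected < store.reorder_point:
        return store.order_up_to - projected
    return 0


def execute(store: Store, projected: int, order_qty: int) -> None:
    """Execute the action (assumes instant delivery, for simplicity)."""
    store.on_hand = projected + order_qty


def monitor(store: Store, day: int, demand: int, order_qty: int) -> None:
    """Monitor outcomes: keep a record that humans or alerting can inspect later."""
    store.log.append(
        {"day": day, "demand": demand, "ordered": order_qty, "on_hand": store.on_hand}
    )


def run_loop(store: Store, daily_demand: list) -> list:
    for day, demand in enumerate(daily_demand, start=1):
        projected = interpret(store, demand)
        order_qty = decide(store, projected)
        execute(store, projected, order_qty)
        monitor(store, day, demand, order_qty)
    return store.log


if __name__ == "__main__":
    store = Store(on_hand=50, reorder_point=20, order_up_to=60)
    for entry in run_loop(store, [15, 20, 10]):
        print(entry)
```

Even in this toy version, the only window into what the system did is the log written by `monitor`, which is why monitoring carries so much weight once humans leave the loop.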

The advantage of this approach is speed and scalability. Decisions are made in near real time, and systems can operate continuously without waiting on human availability. However, autonomy also introduces complexity.

When decisions are made independently, organizations must rely entirely on system design, data quality, and monitoring frameworks. If something goes wrong, identifying and correcting the issue becomes more difficult.

This model works best when workflows are predictable and well-defined. In more complex or ambiguous environments, it can struggle.

Now that both models are clearly understood, the next step is to compare how they differ in real operational terms.

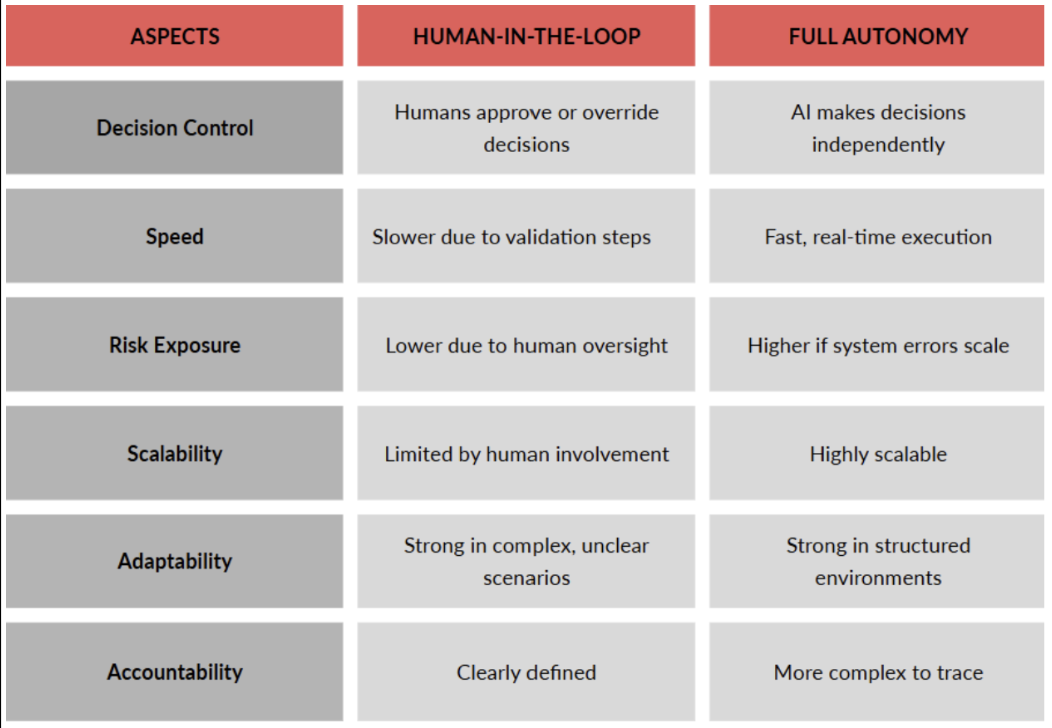

Key Differences That Impact Decision-Making

These differences are not just technical. They directly impact how organizations operate, scale, and manage risk. To make a practical decision, it is important to understand not just the differences, but where each approach works well and where it falls short.

Strengths and Limitations of Each Approach

Human-in-the-Loop: Where It Works Well and Where It Struggles

Strengths

Human-in-the-Loop systems are highly effective in environments where decisions require context, judgment, and interpretation.

They allow organizations to:

- Maintain control over critical decisions

- Reduce risk in high-impact scenarios

- Build trust in AI systems gradually

- Handle ambiguous or incomplete data effectively

In industries like manufacturing and retail, this is particularly valuable in areas such as quality control, supplier negotiations, and customer experience management. Another key advantage is accountability. When humans are part of the decision loop, responsibility is clear, and organizations can manage outcomes more effectively.

Limitations

However, these systems face several challenges. The most significant limitation is scalability. As decision volume increases, human involvement becomes a constraint. This limits the ability to operate at scale and reduces efficiency gains.

There is also a dependency on human consistency. Different individuals may interpret the same situation differently, leading to variability in outcomes. In addition, decision cycles become slower. In time-sensitive environments, delays can impact performance.

Finally, over-reliance on human validation can reduce the incentive to improve system autonomy, keeping organizations stuck in semi-automated states.

Fully Autonomous AI: Where It Delivers and Where It Fails

Strengths

Fully autonomous systems excel in environments where speed, scale, and consistency are critical.

They enable organizations to:

- Process large volumes of data in real time

- Make decisions in near real time

- Operate continuously without human intervention

- Reduce operational costs over time

This makes them well suited to use cases such as inventory optimization, demand forecasting, and dynamic pricing. They also remove the person-to-person variability that affects repetitive decisions, which leads to more consistent outcomes.

Limitations

Despite these advantages, autonomy comes with significant challenges. The biggest concern is risk. When systems operate independently, errors can scale quickly before being detected.

Another limitation is lack of contextual understanding. AI systems may struggle in situations that require human judgment or nuanced interpretation. There is also the issue of transparency. Autonomous systems can be difficult to explain, making it harder for leaders to trust their decisions.

Additionally, governance becomes more complex. Organizations must establish strong monitoring and control mechanisms to manage system behavior. Finally, transitioning to full autonomy requires significant changes in workflows, infrastructure, and organizational mindset.

These strengths and limitations make it clear that neither approach is universally better. The right choice depends on the nature of the problem being solved.

When to Use Human-in-the-Loop vs Full Autonomy

The decision between these models should be based on the characteristics of the workflow rather than the capabilities of the technology.

Human-in-the-Loop is more suitable when:

- Decisions carry high financial or operational risk

- Context and judgment are critical

- Data is incomplete, inconsistent, or rapidly changing

- Regulatory or compliance requirements are involved

In manufacturing, this often applies to quality assurance and supplier decision-making. In retail, it applies to customer experience and strategic pricing decisions.

Full autonomy is more effective when:

- Workflows are stable and repeatable

- Decisions are high-volume and time-sensitive

- Data is structured and reliable

- Speed has a direct impact on performance

Examples include automated replenishment systems in retail or production scheduling in manufacturing.

However, most real-world workflows are not purely one or the other. They contain elements of both. This is why leading organizations are moving toward a more balanced approach rather than choosing a single model.

How Leading Organizations Combine Both Approaches

Instead of choosing between human control and full autonomy, many organizations are designing systems that combine both. A more practical way to think about this is not "Human-in-the-Loop vs Autonomy," but "where humans intervene."

In these systems:

- AI handles routine, high-volume decisions

- Humans step in for exceptions, edge cases, or high-risk scenarios

This creates a layered model where efficiency and control coexist.

For example, in retail operations:

- AI may automatically manage inventory replenishment

- Humans review anomalies such as unexpected demand spikes or supply disruptions

In manufacturing:

- AI may optimize production schedules

- Humans intervene when constraints or disruptions occur
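The retail example can be sketched as a routing rule: orders that look routine go straight through, and anomalies land in a human review queue. The `ReplenishmentOrder` type, the `route` function, and the 50% deviation threshold are hypothetical choices for illustration. A real system would tune the anomaly test to its own data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReplenishmentOrder:
    sku: str
    forecast_units: int
    observed_units: int


def deviation(order: ReplenishmentOrder) -> float:
    """Relative gap between observed demand and the forecast."""
    if order.forecast_units == 0:
        # Any demand against a zero forecast counts as an anomaly.
        return float("inf") if order.observed_units else 0.0
    return abs(order.observed_units - order.forecast_units) / order.forecast_units


def route(orders: list, max_deviation: float = 0.5) -> tuple:
    """Split orders into (auto_approved, needs_human_review)."""
    auto_approved, needs_review = [], []
    for order in orders:
        if deviation(order) > max_deviation:
            needs_review.append(order)  # demand spike or collapse: a person looks at it
        else:
            auto_approved.append(order)  # routine: the system proceeds on its own
    return auto_approved, needs_review


if __name__ == "__main__":
    orders = [
        ReplenishmentOrder("A", forecast_units=100, observed_units=110),
        ReplenishmentOrder("B", forecast_units=100, observed_units=260),
    ]
    auto_approved, needs_review = route(orders)
    print("auto:", [o.sku for o in auto_approved])
    print("review:", [o.sku for o in needs_review])
```

Tightening or loosening `max_deviation` is the lever that shifts work between the system and the people overseeing it.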

This approach allows organizations to:

- Scale operations efficiently

- Maintain control over critical decisions

- Continuously improve system performance

Over time, as systems become more reliable, the level of human intervention can be reduced.

This naturally leads to the most important question for leadership teams: where should control actually sit in these systems?

Deciding Where Control Should Sit

Deciding where control should sit is one of the most critical decisions in AI deployment, and it is often where organizations struggle the most. Many either over-control systems, limiting their value, or over-automate too early, increasing risk.

The right approach is not to choose a fixed level of control, but to design control as a dynamic element within the system. Four factors should guide that design.

Risk exposure: Not all decisions carry the same level of consequence. In manufacturing, a minor scheduling adjustment may be low risk, while a change in production configuration could have significant impact. In retail, adjusting pricing for a low-demand product is very different from making changes to high-revenue categories. Control should scale with risk. High-impact decisions require stronger oversight, especially in early stages of deployment.

System maturity: New AI systems should not be given full autonomy immediately. At early stages, models are still learning, edge cases are not fully understood, and performance is less predictable. Human oversight acts as a safety layer during this phase. As systems mature and demonstrate consistent performance, control can gradually shift toward autonomy. This transition should be based on evidence, not assumption.

Data reliability: AI systems are only as good as the data they operate on. In environments where data is incomplete, delayed, or inconsistent, human involvement becomes essential. In contrast, when data pipelines are stable and reliable, autonomy becomes more viable.

Decision complexity: Some decisions are straightforward and rule-based, while others require interpretation, trade-offs, and contextual understanding. Complex decisions are less suited for full autonomy, especially in dynamic environments.
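To make the four factors concrete, the sketch below turns them into a simple scoring rule. The 1-to-5 scales, the equal weighting, and the cut-offs are assumptions chosen for illustration only. Any real policy would need to be calibrated by the teams that own the risk.

```python
from enum import Enum


class Oversight(Enum):
    HUMAN_APPROVAL = "human approves every decision"
    HUMAN_MONITORING = "human monitors and can override"
    AUTONOMOUS = "autonomous with exception handling"


def oversight_level(risk: int, maturity: int, data_reliability: int, complexity: int) -> Oversight:
    """Map the four factors, each scored 1 (low) to 5 (high), to an oversight level."""
    scores = {
        "risk": risk,
        "maturity": maturity,
        "data_reliability": data_reliability,
        "complexity": complexity,
    }
    for name, value in scores.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")

    # High-impact decisions keep human approval no matter how good the system is.
    if risk >= 4:
        return Oversight.HUMAN_APPROVAL

    # Risk and complexity push toward oversight; maturity and reliable data reduce it.
    pressure = risk + complexity + (6 - maturity) + (6 - data_reliability)  # range 4..20
    if pressure >= 14:
        return Oversight.HUMAN_APPROVAL
    if pressure >= 9:
        return Oversight.HUMAN_MONITORING
    return Oversight.AUTONOMOUS


if __name__ == "__main__":
    # A low-risk, simple decision on a mature system with clean data.
    print(oversight_level(risk=1, maturity=5, data_reliability=5, complexity=1))
    # A new system working with patchy data.
    print(oversight_level(risk=3, maturity=1, data_reliability=1, complexity=3))
```

The hard override on risk reflects the point that a mature system and clean data do not, by themselves, justify removing oversight from high-impact decisions.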

Another important consideration is organizational readiness. Even if the technology supports autonomy, teams must be comfortable with it. Trust plays a major role. If stakeholders do not trust the system, they will override it, reducing its effectiveness.

This is why many organizations adopt a phased approach:

- Start with Human-in-the-Loop for validation

- Move to Human-on-the-Loop for monitoring

- Transition to autonomy with exception handling
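One way to keep this progression evidence-based is to gate each step on observed performance. The sketch below promotes a workflow by one phase only after enough decisions have been reviewed and a high share of them were accepted without changes. The `Phase` enum, the 200-decision minimum, and the 95% acceptance bar are hypothetical values, not recommendations.

```python
from enum import IntEnum


class Phase(IntEnum):
    HUMAN_IN_THE_LOOP = 1           # humans validate every decision
    HUMAN_ON_THE_LOOP = 2           # humans monitor and can override
    AUTONOMOUS_WITH_EXCEPTIONS = 3  # humans handle exceptions only


def next_phase(
    current: Phase,
    accepted: int,
    total: int,
    min_decisions: int = 200,
    min_acceptance_pct: int = 95,
) -> Phase:
    """Return the phase to run next, given how many decisions humans accepted unchanged."""
    if total < min_decisions:
        return current  # not enough evidence yet
    # Integer arithmetic avoids floating-point surprises right at the threshold.
    if accepted * 100 < min_acceptance_pct * total:
        return current  # performance does not yet justify less oversight
    return Phase(min(current + 1, Phase.AUTONOMOUS_WITH_EXCEPTIONS))


if __name__ == "__main__":
    print(next_phase(Phase.HUMAN_IN_THE_LOOP, accepted=190, total=200).name)
    print(next_phase(Phase.HUMAN_ON_THE_LOOP, accepted=180, total=200).name)
```

A mirror-image rule that moves a workflow back a phase when acceptance drops would fit the same structure. It is left out here to keep the sketch short.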

This progression allows control to evolve alongside system capability.

A critical mistake leaders make is treating control as a binary decision. In reality, control should be distributed across the workflow, with different levels of oversight applied at different stages.

For example, in a retail supply chain:

- Forecasting may be fully automated

- Allocation decisions may require validation

- Exception handling may be human-driven
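The stage-by-stage split above can be written down as an explicit policy, so that the level of oversight is a configuration choice rather than something buried in code. This is a minimal sketch. The stage names mirror the list above, while `Mode`, `CONTROL_POLICY`, and `resolve` are hypothetical.

```python
from enum import Enum


class Mode(Enum):
    AUTOMATED = "system decides"
    VALIDATED = "system proposes, human approves"
    HUMAN_DRIVEN = "human decides"


# Assumed policy for a retail supply chain; each stage gets its own level of control.
CONTROL_POLICY = {
    "forecasting": Mode.AUTOMATED,
    "allocation": Mode.VALIDATED,
    "exception_handling": Mode.HUMAN_DRIVEN,
}


def resolve(stage, ai_proposal, *, approved=None, human_choice=None):
    """Return the decision to apply for a stage, enforcing that stage's control mode."""
    mode = CONTROL_POLICY[stage]
    if mode is Mode.AUTOMATED:
        return ai_proposal
    if mode is Mode.VALIDATED:
        if approved is None:
            raise PermissionError(f"{stage}: waiting for human validation")
        return ai_proposal if approved else None  # None means rejected, nothing is applied
    if human_choice is None:
        raise PermissionError(f"{stage}: a human must make this decision")
    return human_choice  # the AI proposal is advisory only


if __name__ == "__main__":
    print(resolve("forecasting", 120))
    print(resolve("allocation", {"store_a": 70, "store_b": 50}, approved=True))
    print(resolve("exception_handling", "expedite", human_choice="reroute"))
```

Changing a stage from `VALIDATED` to `AUTOMATED` then becomes a one-line policy change that can be reviewed and audited like any other.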

This layered control supports both efficiency and reliability. Ultimately, deciding where control should sit is about aligning technology capability with business risk and operational reality. Organizations that get this right create systems that are both scalable and trustworthy.

With a clear understanding of how control should be designed, what remains is to consider what this means for leaders making long-term AI decisions.

Conclusion

The discussion around Human-in-the-Loop and full autonomy is often framed as a choice between control and efficiency. In reality, it is a question of how control should evolve as systems grow in complexity and scale.

Organizations that succeed are not the ones that automate the fastest or retain the most control. They are the ones that design systems where control is intentional, flexible, and aligned with risk, data reliability, and decision complexity.

In the early stages, human involvement plays a critical role in building trust and ensuring stability. As systems mature, control shifts from direct intervention to monitoring, exception handling, and strategic oversight. This transition is where most organizations struggle, either moving too quickly toward autonomy or remaining dependent on manual validation for too long.

At Finzarc, this is where execution becomes critical. The focus is not on choosing between human involvement and autonomy, but on designing systems where both operate together effectively. By structuring workflows, defining ownership, and connecting decision-making with real-time signals, control becomes part of the system rather than a constraint on it.

For leaders, the shift is clear. The goal is not to replace human decision-making or to automate everything. It is to build systems that can scale decisions while maintaining trust and accountability.

The real advantage lies in designing systems where control evolves with performance, enabling both speed and reliability over time.