Key Highlights

- AI agents behave differently in production because real-world inputs are unpredictable.

- Many organizations deploy AI agents but fail to scale them or enable continuous learning in production.

- The real gap is operational, not technical: success depends on systems that drive ongoing improvement, not just deployment.

- Most agents don't improve after deployment because learning systems are missing.

- Evaluation methods don't match real-world conditions, so performance issues go unnoticed.

- Feedback from users and systems exists but is rarely captured or used properly.

- Retraining is not linked to actual performance problems, so improvements are inconsistent.

- System design (memory, tools, workflows) impacts performance more than just the model.

- Static guardrails limit adaptability and do not support continuous learning.

- Real value comes from building systems that help agents learn continuously in production.

AI agents are often evaluated in controlled environments where everything is predictable. Inputs are clean, datasets are curated, and success is measured against predefined benchmarks. In these conditions, performance appears stable and reliable. However, production environments operate under completely different realities.

Once deployed, AI agents begin interacting with real users, real workflows, and constantly changing data. Inputs become inconsistent, context varies across use cases, and unexpected scenarios emerge frequently. Unlike testing environments, where edge cases are simulated, production systems face them continuously. This is where the expectation changes. AI agents are no longer expected to simply execute tasks correctly. They are expected to adapt, improve, and learn from ongoing interactions.

In theory, production environments should be the richest source of learning. Every interaction generates signals. Every failure exposes a gap. Every correction provides an opportunity to improve. In practice, most organizations fail to convert this into structured learning.

While enterprises are increasingly capable of deploying AI agents, they struggle to design systems that allow those agents to evolve after deployment. Agents continue operating based on initial training, even as real-world conditions shift.

The issue is not a lack of data. Production environments generate more data than any testing system ever could. The issue is that this data is not captured, structured, or used effectively. This creates a gap between deployment and improvement. Systems function, but they do not evolve in proportion to the complexity of the environment they operate in. To understand why this happens, it is necessary to examine what actually changes when AI agents move from development into production environments.

The Data Behind The Execution Gap

The gap between deploying AI agents and actually improving them in production environments is becoming more pronounced, and recent data clearly shows where the breakdown occurs. Organizations are investing heavily in AI capabilities, experimenting with use cases, and deploying agents into real workflows. Yet, when it comes to sustained improvement and measurable impact, most systems fall short.

The issue is not access to technology or lack of experimentation. The issue lies in what happens after deployment, specifically, how learning is (or is not) managed in production environments.

1. Scaling remains the biggest constraint.

According to McKinsey & Company (State of AI, 2025), nearly two-thirds of organizations have not yet scaled AI across the enterprise. Most initiatives remain confined to pilot stages or isolated use cases. This has direct implications for production learning. When AI agents are not deeply integrated into core workflows, they are not exposed to the full range of real-world variability. As a result, they do not encounter enough diverse scenarios to improve meaningfully. Scaling is not just about expansion. It is about creating the conditions under which learning can happen continuously.

2. Value creation is highly concentrated among a small group of companies.

Research from Boston Consulting Group (2025) shows that only about 5% of companies are generating significant value from AI. This is a critical signal. It suggests that while many organizations are building and deploying AI systems, very few are able to translate that into consistent performance improvement. The difference between these high-performing organizations and the rest is not necessarily better models, but better systems around those models. These organizations are more likely to have structured feedback loops, continuous evaluation mechanisms, and clearly defined processes for improving performance in production.

3. Impact breaks at the enterprise level.

Even when individual use cases succeed, they rarely translate into organization-wide impact. McKinsey reports that only 39% of organizations see measurable EBIT impact from AI. This highlights a structural disconnect. Localized success does not automatically scale into systemic performance. In the context of production learning, this means that even if an AI agent performs well in one workflow, that learning is not propagated across the system. Each deployment operates in isolation, limiting the overall impact of AI initiatives.

4. Adoption of AI agents is accelerating rapidly.

According to Deloitte (2025 Predictions Report), 25% of enterprises using generative AI are expected to deploy AI agents in 2025, increasing to 50% by 2027. This rapid adoption creates urgency. More agents will enter production environments, interacting with more users and more complex workflows. However, without systems that enable continuous learning, this increased deployment will not automatically translate into better performance. Instead, organizations risk scaling systems that remain static.

5. AI objectives shape how systems evolve.

McKinsey & Company notes that while 80% of organizations prioritize efficiency as a primary goal for AI, the companies generating the most value also focus on growth and innovation. This distinction directly impacts how systems evolve in production. Systems designed only for efficiency tend to optimize existing workflows but remain static. In contrast, systems designed for growth are more likely to incorporate feedback, adapt to changing conditions, and improve over time.

Taken together, these findings point in the same direction. Organizations are not struggling to build AI agents. They are struggling to create the conditions under which those agents can learn continuously in production environments. The gap between potential and performance is not a technology gap. It is an operational gap.

As more agents are deployed into real-world systems, this gap will become even more visible. The competitive advantage will not come from deploying AI faster, but from building systems that allow AI to improve consistently over time. To understand how this gap plays out in practice, the next step is to look at what actually happens to AI agents once they are deployed into production environments.

What Actually Happens to AI Agents After Deployment

1. Performance Becomes Dynamic, But Evaluation Remains Static

When AI agents are deployed into production, their operating conditions change immediately. Instead of dealing with controlled inputs, they now process real-world data that is inconsistent, incomplete, and often ambiguous.

However, the way organizations evaluate these systems often does not change.

Most evaluation frameworks are designed for development environments. They rely on test datasets, predefined scenarios, and static benchmarks. These methods are useful for validating initial performance, but they are insufficient for measuring real-world effectiveness.

In production:

- Inputs vary significantly across users and contexts

- Edge cases appear more frequently than expected

- System behavior must adapt to changing conditions

Without continuous evaluation mechanisms, organizations lack visibility into how the system is actually performing.

For example, an AI agent handling customer queries may perform well on standard requests but fail in cases involving ambiguous language or new product categories. If these failures are not tracked systematically, the organization assumes the system is functioning correctly.

Over time, this creates hidden performance gaps. The core issue is that evaluation remains static while the environment becomes dynamic. This disconnect prevents meaningful learning.
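One way to make evaluation less static is to track outcomes over a rolling window, broken down by query category. The sketch below is a minimal illustration under stated assumptions, not a reference implementation: the class name `RollingEvaluator`, the category labels, and the 0.8 threshold are all hypothetical, and it assumes each interaction can be labeled as a success or a failure.

```python
from collections import defaultdict, deque


class RollingEvaluator:
    """Tracks recent task outcomes per query category (all names hypothetical)."""

    def __init__(self, window=100, min_samples=5):
        self.min_samples = min_samples
        # One bounded queue of booleans per category; old outcomes fall off.
        self.outcomes = defaultdict(lambda: deque(maxlen=window))

    def record(self, category, success):
        self.outcomes[category].append(bool(success))

    def success_rate(self, category):
        results = self.outcomes.get(category)
        if not results:
            return None  # nothing observed for this category yet
        return sum(results) / len(results)

    def weak_categories(self, threshold=0.8):
        """Categories with enough samples whose success rate is below threshold."""
        weak = {}
        for category, results in self.outcomes.items():
            if len(results) >= self.min_samples:
                rate = sum(results) / len(results)
                if rate < threshold:
                    weak[category] = rate
        return weak


# Illustrative data: standard requests mostly succeed, a new category does not.
evaluator = RollingEvaluator(window=50, min_samples=5)
for _ in range(9):
    evaluator.record("standard_request", True)
evaluator.record("standard_request", False)
for ok in [True, False, False, False, True, False]:
    evaluator.record("new_product_category", ok)

print(evaluator.weak_categories(threshold=0.8))
```

In this example only the new product category is flagged, which is the kind of hidden gap an aggregate success rate would mask.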

2. Feedback Exists Everywhere, But Is Rarely Structured

Every production interaction generates feedback. This feedback may not always be explicit, but it exists in multiple forms:

- Users correcting the agent's response

- Tasks being escalated to human teams

- Partial task completions

- Repeated queries for the same issue

These signals are extremely valuable because they reflect real-world performance. However, in most organizations, this feedback is not structured or captured systematically.

Instead:

- Feedback remains embedded in logs or conversation data

- No mechanism exists to categorize or prioritize it

- It is not linked to improvement pipelines

For instance, if a support agent consistently fails to resolve a certain type of query, that pattern may be visible in interaction logs. But unless there is a system to extract and analyze that pattern, it does not translate into learning.

This creates a situation where production generates insights, but those insights are not operationalized. Without structured feedback loops, the system is exposed to learning opportunities but cannot act on them.
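As a rough illustration of what structuring this feedback could look like, the sketch below turns raw interaction records into named signals and ranks them by frequency. The `Interaction` fields and signal names are assumptions made for the example. Real logs will have a different shape and will usually need their own extraction logic.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Interaction:
    """One logged interaction; the field names are illustrative assumptions."""
    query_type: str
    user_corrected: bool = False
    escalated: bool = False
    completed: bool = True
    repeat_of_earlier_query: bool = False


def extract_signals(interaction):
    """Map one raw log record to zero or more named feedback signals."""
    signals = []
    if interaction.user_corrected:
        signals.append("user_correction")
    if interaction.escalated:
        signals.append("escalation")
    if not interaction.completed:
        signals.append("partial_completion")
    if interaction.repeat_of_earlier_query:
        signals.append("repeated_query")
    return signals


def prioritize(interactions):
    """Count signals per (query_type, signal) pair, most frequent first."""
    counts = Counter()
    for interaction in interactions:
        for signal in extract_signals(interaction):
            counts[(interaction.query_type, signal)] += 1
    return counts.most_common()


# Illustrative log: billing queries are escalated repeatedly.
log = [
    Interaction("billing", escalated=True),
    Interaction("billing", escalated=True, completed=False),
    Interaction("password_reset"),
    Interaction("billing", user_corrected=True),
    Interaction("shipping", repeat_of_earlier_query=True),
]
ranked = prioritize(log)
print(ranked[0])
```

The ranked list is what an improvement pipeline would consume: it names the query type and the kind of failure, rather than leaving the pattern buried in logs.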

3. Retraining Is Disconnected from Real Performance

Retraining is often assumed to be the primary way AI systems improve. However, in production environments, retraining is frequently disconnected from actual system behavior.

In many cases:

- Retraining happens on a fixed schedule

- Updates are driven by new data availability

- Model improvements are tested in isolation

What is missing is a direct connection between observed performance issues and retraining decisions.

For example, if an AI agent begins to misinterpret certain types of inputs, there should be a clear process that:

- Identifies the issue

- Collects relevant examples

- Updates the model or system

- Validates the improvement

In practice, this loop is often incomplete. As a result:

- Systems continue to operate with known weaknesses

- Updates may not address the most critical issues

- Improvements are inconsistent

Effective learning requires retraining to be triggered by real-world signals, not arbitrary schedules.
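The four-step loop above can be sketched as a small trigger policy. This is a simplified illustration, not a prescribed method: the `RetrainingTrigger` class, its thresholds, and the `validate` helper are hypothetical, and it assumes a success rate can be measured both in production and on held-out failure cases.

```python
from dataclasses import dataclass, field


@dataclass
class RetrainingTrigger:
    """Decides whether observed failures justify an update (hypothetical policy)."""
    baseline_success_rate: float
    max_drop: float = 0.05          # tolerated drop below the baseline
    min_failure_examples: int = 20  # examples needed before an update is useful
    failure_examples: list = field(default_factory=list)

    def observe(self, example, success):
        # Collect relevant examples: keep the inputs the agent got wrong.
        if not success:
            self.failure_examples.append(example)

    def should_retrain(self, recent_success_rate):
        # Identify the issue as a drop relative to the baseline.
        degraded = (self.baseline_success_rate - recent_success_rate) > self.max_drop
        enough_data = len(self.failure_examples) >= self.min_failure_examples
        return degraded and enough_data


def validate(candidate_rate, current_rate, min_gain=0.01):
    """Validate the improvement: accept only if the candidate clearly beats the current system."""
    return candidate_rate - current_rate >= min_gain


trigger = RetrainingTrigger(baseline_success_rate=0.92, min_failure_examples=3)
for text in ["ambiguous refund request", "new product SKU", "mixed-language query"]:
    trigger.observe(text, success=False)
trigger.observe("standard request", success=True)

print(trigger.should_retrain(recent_success_rate=0.80))
print(trigger.should_retrain(recent_success_rate=0.90))
```

The design choice here is that a schedule never appears in the decision. An update is considered only when performance has measurably dropped and enough failing examples exist to target it.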

4. System Design Limits Learning More Than Data

A common assumption is that improving AI agents is primarily about improving models. While models are important, production performance is heavily influenced by system design.

Modern AI agents are composed of multiple components:

- Decision-making logic

- Tool integrations

- Memory systems

- Execution workflows

Each of these components affects how the agent behaves.

For example:

- An agent without memory may fail to learn from past interactions

- Poor tool integration can limit the agent's ability to act effectively

- Inefficient planning logic can lead to suboptimal decisions

This means that even if the model is improved, the overall system may still underperform. Production learning, therefore, requires changes at the system level, not just the model level. Organizations that focus only on retraining often miss the larger opportunity to improve how the system operates.

5. Guardrails Control Behavior but Do Not Enable Learning

Guardrails are necessary in production environments to ensure safety, compliance, and reliability. They define boundaries within which the agent can operate. However, most guardrails are static.

They are designed to:

- Prevent certain actions

- Restrict outputs

- Enforce predefined rules

While this reduces risk, it also limits adaptability. More importantly, guardrails are rarely integrated into learning systems. They do not evolve based on:

- New types of errors

- Changing user behavior

- Emerging edge cases

For example, if a new type of risky scenario emerges, the guardrails stay unchanged until someone updates them manually. Without adaptive guardrails, organizations cannot scale learning without increasing risk.
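One possible shape for an adaptive guardrail is a rule set that collects incident reports from production and promotes recurring patterns only after human review. The sketch below uses a deliberately simple substring blocklist to keep the idea visible. The `Guardrails` class, its methods, and the example terms are hypothetical, and it assumes blocked terms are stored in lowercase.

```python
from dataclasses import dataclass, field


@dataclass
class Guardrails:
    """Blocklist-style guardrails that grow through reviewed proposals (illustrative only)."""
    blocked_terms: set = field(default_factory=set)  # assumed to be lowercase
    pending: dict = field(default_factory=dict)      # term -> number of incident reports
    review_threshold: int = 3

    def allows(self, text):
        lowered = text.lower()
        return not any(term in lowered for term in self.blocked_terms)

    def report_incident(self, term):
        """Record a risky pattern seen in production; nothing is blocked yet."""
        term = term.lower()
        if term not in self.blocked_terms:
            self.pending[term] = self.pending.get(term, 0) + 1

    def ready_for_review(self):
        """Terms reported often enough to put in front of a human reviewer."""
        return sorted(t for t, n in self.pending.items() if n >= self.review_threshold)

    def approve(self, term):
        """A human reviewer promotes a pending term into the active rule set."""
        term = term.lower()
        if term in self.pending:
            del self.pending[term]
            self.blocked_terms.add(term)


rails = Guardrails(blocked_terms={"wire transfer"})
for _ in range(3):
    rails.report_incident("gift card code")
rails.report_incident("crypto wallet")

print(rails.ready_for_review())
```

Keeping the approval step manual is intentional in this sketch: the guardrails evolve from production signals, but a person still decides what becomes a rule.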

What This Means for Enterprise Tech Teams

These patterns fundamentally change how enterprise teams should think about AI agents in production.

Deployment should no longer be seen as the final milestone. It is the beginning of a continuous process where the system must be monitored, evaluated, and improved over time. Organizations that treat deployment as completion often find that performance stagnates or declines.

Learning must become an operational function. Just as teams manage infrastructure, uptime, and security, they must also manage how AI systems evolve. This includes setting up processes for evaluation, feedback collection, and system updates.

Ownership becomes critical. In many enterprises, responsibility for AI systems is distributed across multiple teams. Without clear ownership of learning outcomes, no team is accountable for ensuring improvement.

Example: Consider an enterprise deploying an AI agent for internal IT support. Initially, the system performs well because it is trained on historical queries. Over time, new tools are introduced, workflows change, and new types of issues emerge. Without structured learning mechanisms, the agent continues to operate based on outdated knowledge, leading to increased escalations and reduced trust.

Finally, leaders must recognize that production environments are dynamic. Systems that do not adapt will gradually become misaligned with business needs. This brings us to the next question. Even if organizations understand the need for learning, what challenges prevent them from building effective systems?

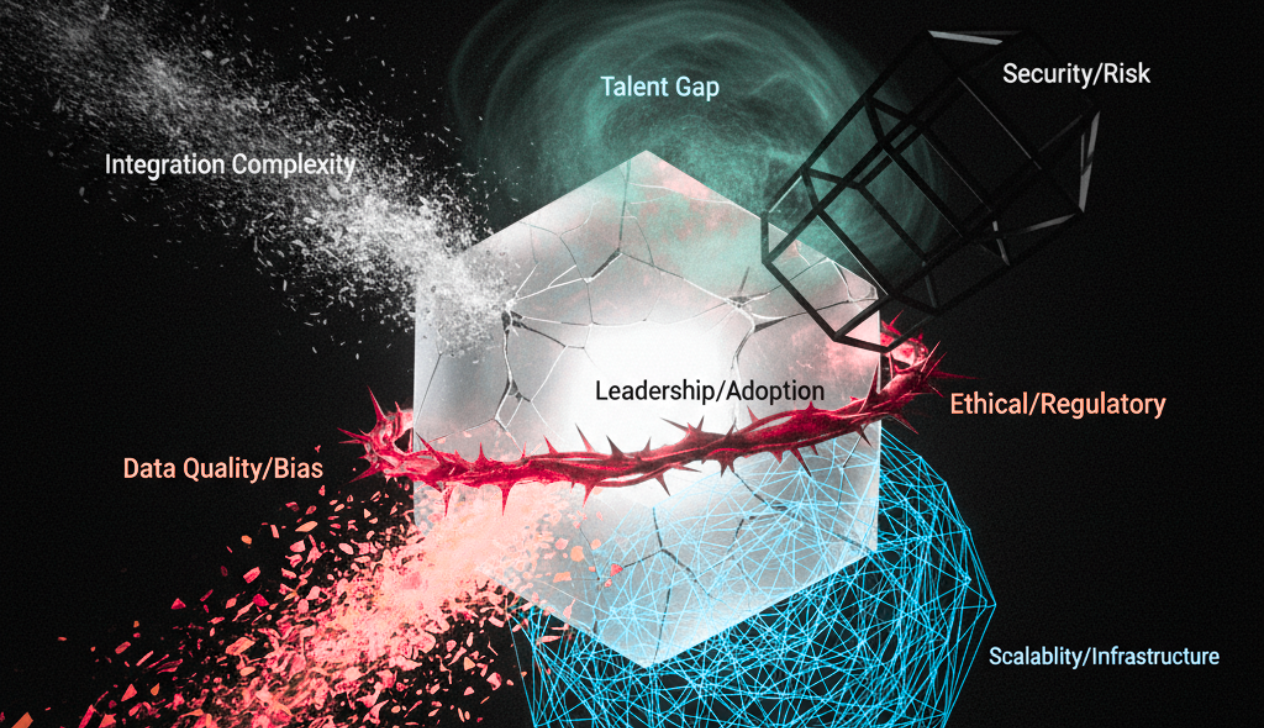

Key Limitations in Building Production Learning Systems

Building learning systems in production environments is inherently complex, and several constraints make this process challenging.

Absence of standardized evaluation frameworks: While model accuracy can be measured in controlled settings, there is no widely accepted method for evaluating performance across diverse real-world scenarios. This makes it difficult to benchmark performance or track improvements consistently.

Data fragmentation: In large organizations, data is distributed across multiple systems. Feedback signals may exist in logs, CRM systems, or operational dashboards, but they are rarely unified. This fragmentation makes it difficult to build a complete view of system performance.

Trade-off between stability and adaptability: Frequent updates can improve performance, but they can also introduce risk. In enterprise environments, especially those involving critical operations, stability is essential. Organizations must balance the need for improvement with the need for reliability.

Governance requirements: Changes to AI systems often require approvals, testing, and compliance checks. These processes add another layer of complexity, slowing down learning cycles and making it harder to respond quickly to new conditions.

Capability gap: Building and managing learning systems requires expertise in data engineering, system design, and AI operations. Many organizations are still developing these capabilities.
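The stability and adaptability trade-off described above can be made explicit with a promotion gate: an update ships only if it improves on average and does not noticeably regress any category. The sketch below is one hedged way to express that. The function name `safe_to_promote`, the thresholds, and the category names are assumptions for illustration, and it presumes both systems were scored on the same evaluation set.

```python
def safe_to_promote(current, candidate, max_regression=0.02, min_overall_gain=0.01):
    """Compare per-category success rates of the current and candidate systems.

    Both arguments map category name -> success rate on the same evaluation set.
    Returns (ok, reasons), where reasons lists why promotion was refused.
    """
    shared = current.keys() & candidate.keys()
    if not shared:
        return False, ["no shared categories to compare"]
    reasons = []
    for category in sorted(shared):
        drop = current[category] - candidate[category]
        if drop > max_regression:
            reasons.append(f"{category} regressed by {drop:.2f}")
    gain = sum(candidate[c] - current[c] for c in shared) / len(shared)
    if gain < min_overall_gain:
        reasons.append(f"average gain {gain:.2f} is below {min_overall_gain:.2f}")
    return (not reasons), reasons


current = {"billing": 0.90, "shipping": 0.85, "returns": 0.70}
risky = {"billing": 0.91, "shipping": 0.78, "returns": 0.85}
steady = {"billing": 0.91, "shipping": 0.85, "returns": 0.85}

print(safe_to_promote(current, risky))
print(safe_to_promote(current, steady))
```

A gate like this also gives governance reviews something concrete to approve, since the refusal reasons are recorded alongside the decision.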

How to Build Learning Systems for AI Agents in Production

Building effective learning systems requires a structured approach that integrates evaluation, feedback, and system improvement.

Establish continuous evaluation: Organizations need to monitor how AI agents perform in real-world conditions. This involves tracking outcomes, identifying patterns of failure, and understanding how performance changes over time. Continuous evaluation provides the foundation for all learning activities.

Design structured feedback loops: Feedback must be captured systematically and transformed into actionable insights. This includes identifying relevant signals, categorizing them, and linking them to improvement processes. Without this structure, feedback remains unused.

Align retraining with performance signals: Retraining should be triggered by specific conditions, such as declining performance or the emergence of new use cases. This ensures that updates are meaningful and targeted.

Improve system architecture: Enhancing components such as memory, planning, and tool integration can significantly improve performance. In many cases, these improvements have a greater impact than retraining alone.

Develop adaptive guardrails: Guardrails should evolve based on system behavior and feedback. This allows organizations to maintain control while enabling continuous improvement.

How to Build AI That Actually Evolves

AI agents do not automatically improve once they move into production. Without the right system design, most of them plateau. Production environments generate continuous signals, but those signals only matter if they are captured, structured, and fed back into the system.

The real challenge in enterprise AI is the improvement gap. Most organizations invest heavily in building and deploying agents, but far fewer invest in the infrastructure required to support continuous learning. The result is predictable. Systems perform well initially but fail to adapt as real-world complexity increases.

At Finzarc, this is where execution changes the outcome. Learning is treated as an operational capability, not a separate initiative. Systems are designed to connect evaluation, feedback, and workflows so that improvement happens continuously as part of daily operations.

For enterprise leaders, the shift is clear. The value of AI in production is not defined by how it performs at launch, but by how effectively it adapts over time. Systems that learn continuously will outperform those that simply execute.