Key Highlights

- Enterprise AI spend hit $37B in 2025, yet only 28% of AI use cases in infrastructure and operations fully deliver the expected ROI.

- IDC projects CIOs will underestimate AI infrastructure costs by 30% through 2027.

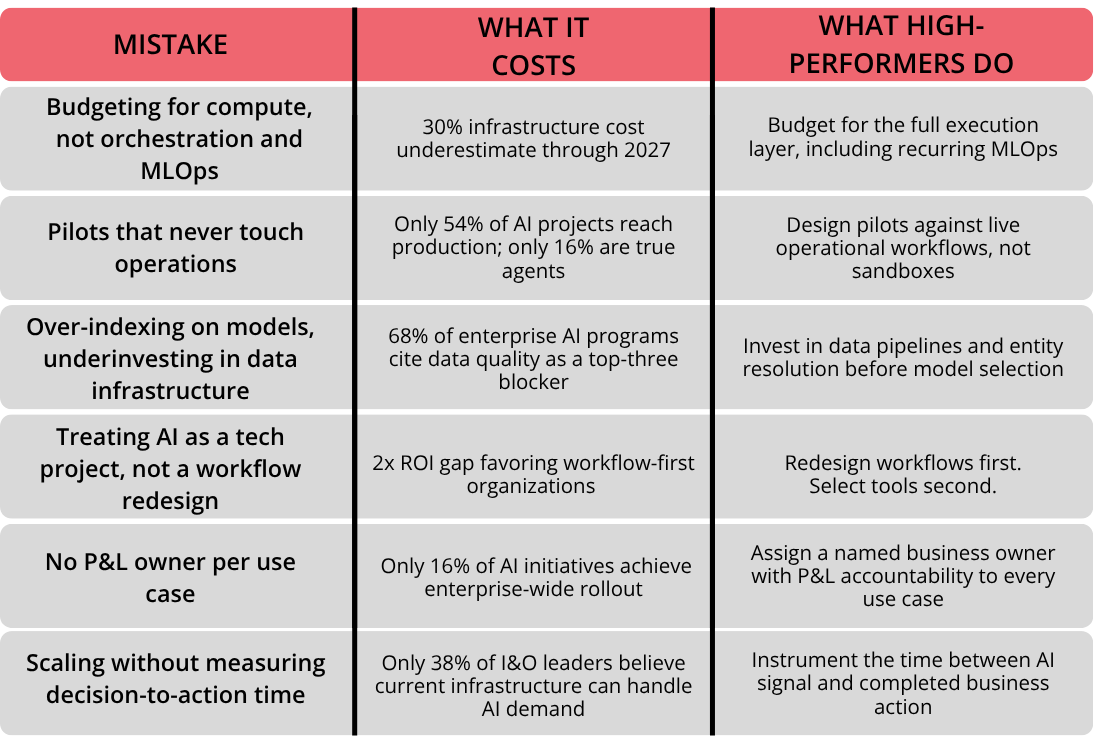

- Six predictable mistakes, from compute-only budgets to missing P&L owners, are killing AI ROI in 2026.

- McKinsey found organizations that redesign workflows before selecting AI tools are 2x more likely to capture ROI.

- Do not add more tools. Fix how decisions move.

Enterprise AI spending hit $37 billion in 2025, up from $1.7 billion just two years earlier. Gartner projects global AI spending will cross $2.52 trillion in 2026, a 44% year-over-year increase. And yet, only 28% of AI use cases in infrastructure and operations fully deliver the ROI leaders expected, according to a Gartner survey of 782 I&O leaders conducted in late 2025.

The gap between spend and return is widening, not closing. Boards know it. CFOs are starting to ask pointed questions. According to a Harris Poll survey commissioned by Dataiku, 98% of tech leaders now face increased pressure to demonstrate AI ROI, and 71% of CIOs believe their AI budget will face cuts or a freeze if targets are missed by mid-2026.

Here is the uncomfortable truth the research makes clear: most organizations do not have an AI problem. They have an execution problem. The infrastructure is being built for experimentation, not for operational decisions. The mistakes are predictable, well-documented, and repeating across industries.

This research brief is our answer. We reviewed twelve major reports published between November 2025 and April 2026 to answer one question: why does AI spending keep rising while ROI keeps lagging? The brief covers the six infrastructure mistakes the data consistently points to.

How We Built This View

This brief draws on primary research from Gartner's April 2026 I&O survey (782 I&O leaders), Menlo Ventures' State of Generative AI in the Enterprise 2025 (nearly 500 US enterprise decision-makers), MIT's NANDA GenAI Divide study, McKinsey's State of AI (November 2025), IDC infrastructure cost projections, IBM Institute for Business Value research, Flexential's 2025 State of AI Infrastructure, Netskope's infrastructure readiness survey, the Fujitsu Technology and Service Vision 2025, and Databricks enterprise AI research. It also draws on analysis published in CIO, The Register, TechTarget, and the National CIO Review.

All cited statistics are from 2024 onward. When reports disagreed, we kept the tension instead of forcing a cleaner story. Real operations are messy. The data should reflect that.

The Six Infrastructure Mistakes Killing AI ROI

Mistake 1: Budgeting for Compute, Not for Orchestration

The most expensive line item in an AI infrastructure budget is rarely the GPU. It is the engineering effort required to get model outputs into live workflows.

IDC projects that Global 1,000 companies will underestimate their AI infrastructure costs by 30% through 2027. The reason, according to IDC's VP of infrastructure research, is that AI spending behaves differently from traditional IT: usage expands quickly once models are introduced, a workflow designed for one team becomes a shared service, and compute becomes probabilistic rather than predictable.

Hidden engineering costs compound this. TechTarget documents a financial services firm that built an intelligent document processing system using hyperscaler tools. A three-year total cost of ownership analysis revealed more than $1.5 million in technical labor and infrastructure — more than three times the cost of a purpose-built platform.

Beyond orchestration, there is the ongoing MLOps cost: model drift detection, retraining pipelines, monitoring, and data pipeline maintenance. Production models are not static. Data patterns shift, user behavior changes, and performance degrades over time if not actively monitored and retrained. Gartner projected that 70% of enterprises would operationalize AI architectures through MLOps by 2025, precisely because ad-hoc model management does not scale. Most AI infrastructure budgets capture the training cost once, not the recurring operational cost that keeps the system delivering output the business can trust.
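To make "model drift detection" less abstract, here is a minimal sketch of one common check, the Population Stability Index (PSI), which compares how a single input feature is distributed today against a baseline sample. None of the cited reports prescribes this method. The function name `psi`, the ten-bin default, and the 0.2 alert level (a widely quoted rule of thumb) are our assumptions for illustration.

```python
import math


def psi(baseline, current, bins=10, eps=1e-6):
    """Population Stability Index for one numeric feature (illustrative sketch)."""
    lo, hi = min(baseline), max(baseline)
    # Fall back to a width of 1.0 if every baseline value is identical.
    width = (hi - lo) / bins or 1.0

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge bins.
            idx = int((v - lo) / width)
            counts[max(0, min(bins - 1, idx))] += 1
        total = len(values)
        # eps keeps empty bins from causing log(0) or division by zero.
        return [max(c / total, eps) for c in counts]

    b, c = shares(baseline), shares(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


DRIFT_ALERT = 0.2  # assumed threshold, not taken from the cited research
```

A scheduled job could compute `psi` per feature and open a retraining ticket when the value crosses `DRIFT_ALERT`. That job, and the person who responds to the ticket, are the recurring cost the budget tends to omit.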

Why this mistake is structural: AI infrastructure budgets are built from the compute stack upward. They ignore the orchestration, retry logic, human-in-the-loop controls, and workflow connectors that turn an inference result into a business action, and they ignore the ongoing MLOps investment required to keep that result trustworthy over time. This is the debt nobody puts on the P&L. It is where the 30% cost miss lives.
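The orchestration work described above is easy to underestimate because each piece looks small. The sketch below shows two of those pieces in miniature: retry logic around an unreliable model call, and a human-in-the-loop gate before anything is executed. Every name here (`Decision`, `call_with_retry`, `route`) and the 0.9 confidence threshold are hypothetical. A production version would also need logging, idempotency, and an actual review queue.

```python
import time
from dataclasses import dataclass


@dataclass
class Decision:
    action: str        # hypothetical action name, e.g. "approve_refund"
    confidence: float  # model-reported confidence between 0 and 1


def call_with_retry(fn, attempts=3, base_delay=0.5):
    """Call fn(), retrying on any exception with exponential backoff."""
    for attempt in range(attempts):
        try:
            return fn()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the failure to the caller
            time.sleep(base_delay * (2 ** attempt))


def route(decision, auto_threshold=0.9):
    """Execute high-confidence decisions; send everything else to a person."""
    if decision.confidence >= auto_threshold:
        return "execute"
    return "human_review"
```

Even this toy version raises the questions a compute-only budget never asks: who staffs the review queue, and what happens to the workflow while a decision waits in it.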

Mistake 2: Running Pilots That Never Touch Operations

The most-cited AI statistic of the past year is MIT NANDA's finding that 95% of enterprise AI pilots show no measurable bottom-line impact despite an estimated $30 to $40 billion in US enterprise AI investment in 2024. Menlo Ventures' data is slightly more optimistic but converges on the same structural issue: only 16% of enterprise AI deployments qualify as "true agents" with planning, tool use, and autonomous execution. The rest are fixed-sequence prompt workflows that never leave the pilot sandbox.

Flexential's 2025 State of AI Infrastructure report found that only 54% of AI projects successfully transitioned from pilot to production in 2025. The remainder are stuck in what industry analysts now call "pilot purgatory."

Why this mistake is structural: A pilot is not an infrastructure investment. A pilot is a demo. Infrastructure is load-bearing. The moment a pilot tries to serve real operational decisions, it runs into the orchestration debt from Mistake 1, exposes the data debt in Mistake 3, and stalls at the workflow redesign in Mistake 4. Pilots do not fail because the model is bad. They fail because the operating system around the model was never built.

Mistake 3: Over-Indexing on Models, Underinvesting in Data Infrastructure

A 2025 Databricks study found that 68% of enterprise AI initiatives cite data quality as a top-three blocker, yet investment in data infrastructure continues to lag investment in AI tooling.

Gartner's I&O survey corroborates this: 38% of failures in AI projects are attributed to persistent skill gaps, with an equal 38% attributed to poor data quality or limited data availability.

The Fujitsu Technology and Service Vision 2025 puts a sharper point on the scale: 80 to 90% of enterprise data remains unstructured, and most AI is being deployed on top of that rather than alongside a disciplined effort to clean it.

Why this mistake is structural: Models are visible. Data pipelines are not. Executives can see a model demo. They cannot see whether the retrieval layer is pulling from stale documents, whether entity resolution across CRM, ERP, and data warehouse is stable, or whether the same product SKU carries three different IDs in three different systems. AI does not fix broken data infrastructure. It magnifies it, confidently.
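The SKU example can be made concrete with a small sketch. It assumes a hand-maintained crosswalk from each system's local ID to one canonical SKU. The system names, IDs, and the `resolve` function are invented for illustration, and real entity resolution usually involves fuzzy matching and stewardship workflows well beyond a lookup table.

```python
# Hypothetical crosswalk: (system, local_id) -> canonical SKU.
CROSSWALK = {
    ("crm", "P-1001"): "SKU-1",
    ("erp", "000451"): "SKU-1",
    ("warehouse", "widget_a"): "SKU-1",
}


def resolve(records, crosswalk=CROSSWALK):
    """Group records by canonical SKU and collect the ones with no mapping."""
    resolved, unmapped = {}, []
    for rec in records:
        key = (rec["system"], rec["id"])
        canonical = crosswalk.get(key)
        if canonical is None:
            # An unmapped ID is data debt: the model sees it as a new product.
            unmapped.append(key)
        else:
            resolved.setdefault(canonical, []).append(rec)
    return resolved, unmapped
```

The length of the `unmapped` list is a metric an executive can read. Tracking it over time makes the invisible pipeline problem visible before a model is layered on top of it.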

Mistake 4: Treating AI as a Tech Project, Not a Workflow Redesign

This is where most organizations know the answer and still avoid it.

McKinsey's November 2025 research found that organizations reporting significant financial returns from AI are considerably more likely to have redesigned workflows before selecting AI tools. This inverts the usual sequence. Most organizations choose the tool first and only afterward reshape the workflow to fit it.

Gartner's I&O survey reinforces the pattern: 33% of successful AI leaders attribute their success to embedding AI into existing systems and processes people already use. Among the 57% of leaders reporting failures, the most common cause cited was unrealistic expectations that AI would immediately automate complex tasks or fix long-standing operational issues.

Why this mistake is structural: AI exposes structural friction. It does not remove it. If decision rights are unclear, AI makes the ambiguity worse. If incentives are misaligned, AI accelerates the misalignment. Productivity gains at the task level do not automatically translate to margin expansion at the enterprise level. A workflow that was broken before AI will be broken, faster, after AI.

Mistake 5: No Accountable P&L Owner per Use Case

IBM's Institute for Business Value found that only 25% of AI initiatives meet ROI expectations, and only 16% achieve enterprise-wide rollout. The common thread in the failures: no accountable business owner.

Asana's CIO captured the pattern in a recent CIO.com analysis: many organizations start with models and pilots rather than business outcomes, running demos in isolation without redesigning the underlying workflow or assigning a P&L owner. Gartner reports that 26% of successful I&O leaders had full executive support for their use case, and 25% could count on cross-functional collaboration — the single strongest correlation with AI success in the survey.

Why this mistake is structural: AI projects without a named business owner run out of political fuel before they run out of budget. When the first operational disruption hits, there is no one with the authority to absorb the short-term adoption friction in exchange for the long-term ROI. The use case quietly returns to the pilot drawer, labelled "promising but not ready."

Mistake 6: Scaling Without Measuring Decision-to-Action Time

The metrics most CIOs currently track for AI — uptime, inference latency, token cost, model accuracy — measure the technology. They do not measure what matters commercially: how fast the organization converts an AI insight into a business action.

Netskope's January 2026 survey found that only 38% of I&O leaders believe their existing infrastructure can handle the demands AI is placing on it, and 67% of infrastructure executives report being excluded from key decision-making conversations entirely.

That exclusion is the problem. Infrastructure leaders know where decision latency hides. They are not being asked.

Why this mistake is structural: Dashboards and observability tools measure that a model ran. They do not measure whether the output moved a decision closer to execution. In the use cases Gartner identified as fully delivering ROI, the infrastructure is connected to a measurable business outcome. In the use cases that fail, the infrastructure reports its own health and nothing else.
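Decision-to-action time is straightforward to compute once the two timestamps exist. The sketch below assumes each AI-generated signal is logged with a `signal_at` time and, if someone acted on it, an `action_at` time. Those field names and the function itself are our assumptions, not a schema from any cited source.

```python
from datetime import datetime
from statistics import median


def decision_to_action(events):
    """Summarize how long AI signals wait before a business action completes.

    events: dicts with ISO-8601 'signal_at' and 'action_at' (None if no action).
    """
    hours = []
    never_actioned = 0
    for event in events:
        if event.get("action_at") is None:
            never_actioned += 1  # the signal was produced but nothing happened
            continue
        start = datetime.fromisoformat(event["signal_at"])
        end = datetime.fromisoformat(event["action_at"])
        hours.append((end - start).total_seconds() / 3600)
    return {
        "median_hours": median(hours) if hours else None,
        "completed": len(hours),
        "never_actioned": never_actioned,
    }
```

The hard part is not the arithmetic. It is getting the system where the action happens (ticketing, ERP, CRM) to emit `action_at` at all, which is exactly the connection most observability stacks lack.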

At a Glance: The Six Mistakes, Their Cost, and the Fix

What High-Performers Do Differently

Across the twelve sources synthesized here, four practices consistently separate organizations capturing measurable AI ROI from those that are not.

- They redesign workflows first, select tools second. McKinsey's 2x ROI differential for workflow-first organizations is the most reliable predictor in the data.

- They secure executive sponsorship before funding a use case. Gartner's finding that full executive support correlates with use case success has held across three consecutive annual surveys.

- They assign a business-side owner with P&L accountability to every use case. The CIO owns enablement and reliability. The business owner owns outcomes and adoption. Without both, the use case drifts.

- They measure decision-to-action time, not just model performance. The organizations delivering ROI have explicit telemetry on the time between an AI-generated signal and a completed business action. The organizations failing at ROI cannot tell you what that number is.

What This Means for CIOs and CTOs in 2026

Three things are changing fast in how AI infrastructure gets funded, defended, and governed.

FinOps is no longer optional. With IDC projecting a 30% cost underestimate and probabilistic usage replacing predictable consumption, continuous financial visibility on AI infrastructure has moved from nice-to-have to mandatory. Budget conversations that happen annually cannot track spending that scales hourly.
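A rough sketch of what "continuous financial visibility" can mean in practice: project the full-year spend from the most recent daily run rate and compare it with the annual budget. The function names, the seven-day window, and the figures in the usage note are illustrative assumptions. A real FinOps practice would pull actual billing exports and account for seasonality.

```python
def projected_annual_spend(daily_spend, window=7, days_in_year=365):
    """Spend to date plus the recent daily run rate applied to remaining days."""
    recent = daily_spend[-window:]
    run_rate = sum(recent) / len(recent)
    remaining_days = days_in_year - len(daily_spend)
    return sum(daily_spend) + run_rate * remaining_days


def budget_gap(daily_spend, annual_budget, window=7, days_in_year=365):
    """Fractional overrun (positive) or underrun (negative) against budget."""
    projection = projected_annual_spend(daily_spend, window, days_in_year)
    return (projection - annual_budget) / annual_budget
```

For example, 23 days at 100 per day followed by 7 days at 200 per day projects to 70,700 for the year, roughly 94% over a 36,500 budget. An annual review would surface that eleven months too late.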

CIO political capital is converging on a single metric: return on AI (ROAI). CIO.com's recent analysis frames it bluntly: boards are no longer asking whether AI works. They are asking who owns the return on AI. Traditional CIO metrics (uptime, modernization, delivery velocity) remain necessary but are no longer sufficient.

Governance is becoming an infrastructure requirement, not a project overlay. As the EU AI Act and sector-specific frameworks come into force, explainability, lineage, and audit trails have to be built into the infrastructure layer itself. Retrofitting them later is the most expensive path.

The Finzarc View

The research points to one conclusion, repeatedly, from every angle: the bottleneck is not the model. It is the system around the model. Most organizations do not have an AI problem. They have an execution problem. They already have models, dashboards, reports, and automation tools. What they do not have is an execution layer that connects insight to action inside the same workflow. Decisions get stuck in approvals. Ownership is unclear. AI stays advisory instead of becoming operational.

The organizations capturing ROI are not winning because of better models. They are winning because they redesigned how decisions move.

Do not add more tools. Fix how decisions move.

That principle does not get easier as infrastructure budgets grow. It gets harder. Every additional dollar spent on compute without redesigning the execution layer makes the ROI gap harder to close.

Conclusion

The 2026 research is consistent. Enterprise AI investment is rising. Enterprise AI ROI is not. The companies seeing returns are not doing anything magical. They are doing the boring work most teams keep postponing: redesigning workflows, assigning P&L owners, investing in data infrastructure, and measuring decision-to-action time.

Everyone else is paying for compute that does not convert.

Compute that runs but does not decide. Pilots that demo but do not ship. Dashboards that report but do not act.

The question for CIOs and CTOs going into the second half of 2026 is not whether to invest more in AI. The board has already decided that. The question is whether the infrastructure being built today is designed for experimentation or for execution.

If This Research Describes Your Current Reality

The six mistakes above are not hypothetical. They are the recurring patterns the data reveals across enterprise AI programs failing to deliver ROI in 2026.

If any of them describe what is happening inside your organization, the next question is not whether to invest more. It is where your current execution layer breaks.

We work through this directly with leadership teams in a focused working session around their current execution layer. You leave with a documented view of where your AI spend is converting to revenue and where it is not, mapped against the same six infrastructure points this research identifies.

If that conversation feels relevant to your business, get in touch directly.

Sources and Further Reading

- Gartner, AI Projects in I&O Stall Ahead of Meaningful ROI Returns, April 2026

- Menlo Ventures, 2025: The State of Generative AI in the Enterprise, December 2025

- IDC, via CIO, CIOs Will Underestimate AI Infrastructure Costs by 30%, December 2025

- McKinsey, State of AI 2025, November 2025

- MIT NANDA, GenAI Divide Study

- IBM Institute for Business Value, via CIO, AI Misfires Spur CEOs to Rethink Adoption

- Flexential, 2025 State of AI Infrastructure Report

- Netskope, via CIO Dive, Enterprise Infrastructure Still Not Ready for AI, January 2026

- Fujitsu, via CIO, Breaking the 5% ROI Ceiling: Why Enterprise AI Stalls at the Pilot Stage

- Harris Poll / Dataiku, via The Register, Only 28% of AI Infrastructure Projects Fully Pay Off

- TechTarget, AI Failure Examples: What Real-World Breakdowns Teach CIOs

- CIO.com, Why Enterprises Aren't Seeing AI ROI — and What CIOs Can Do About It

- CIO.com, AI Is No Longer Software. It's Enterprise Infrastructure

- CIO.com, A CIO's 5-Point Checklist to Drive Positive AI ROI

- Gartner MLOps enterprise operationalization forecast, via MLOps Developer Guide 2025